The Soap Opera Effect


A local bar in Long Island City was showing the telecast of the Grammy Awards last night. From where I was sitting, I could see it on two TV sets, each set to a different refresh rate. If I turned to my right, the video looked relatively normal, as that set was running at 60 Hz. But if I turned to my left, the video looked like a soap opera. That set was running at 120 Hz, double the refresh rate of the first.

Transferring from one frame rate (30 Hz) to another (24 fps) causes some distortion in this kinescope of Ben Grauer (1949) by National Broadcasting Company. Wikimedia Commons.

The subject in the foreground of the image, such as your telegenic host, appears to move out of sync with the background. Some people might like that effect, as it allows the figure in the foreground to “pop” from the background, but it is a far cry from the creative blur and shallow depth of field that we like in still photography. Instead of looking professional, it looks cheap.

Motion Interpolation

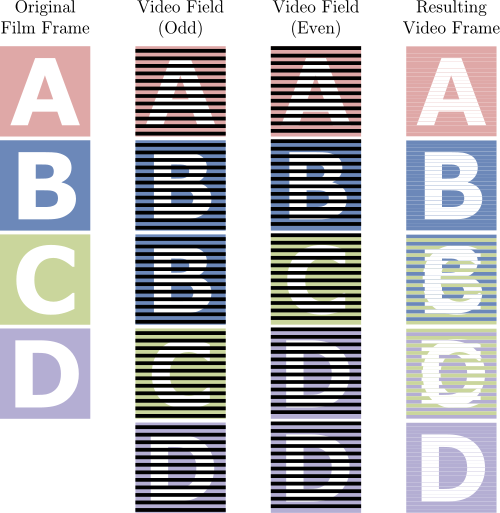

There’s a technical reason for the “soap opera effect.” The refresh rates of the TV signal and the TV set don’t match. In the case of last night’s Grammy Awards telecast, CBS was broadcasting the signal at 1080i/60. That means it has a resolution of 1080 lines. The “i” means that the video signal is interlaced, which describes how the television “draws” the picture. It first draws the odd lines (the first, third, and fifth lines, all the way through the 1079th line), then the even lines (the second, fourth, and sixth lines, all the way through the 1080th line), and so forth.

The “60” refers to the signal refresh rate: it refreshes 60 times per second. Historically, it is 60 due to the “refresh” rate of alternating current in the United States (60 cycles per second). To draw a full picture, it requires two fields: one odd and one even. Thus a 60 Hz interlaced signal is equivalent to 30 video frames per second.
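As a rough illustration of the odd and even fields described above, here is a minimal Python sketch. The function names `split_fields` and `weave` are my own, and a “frame” is just a list of labeled lines rather than real video data.

```python
def split_fields(frame):
    """Split a frame (a list of scan lines) into its odd and even fields.

    Lines are numbered from 1, as in the text, so the odd field holds
    lines 1, 3, 5, ... and the even field holds lines 2, 4, 6, ...
    """
    odd_field = frame[0::2]   # lines 1, 3, 5, ...
    even_field = frame[1::2]  # lines 2, 4, 6, ...
    return odd_field, even_field


def weave(odd_field, even_field):
    """Interleave an odd and an even field back into one full frame."""
    frame = []
    for odd_line, even_line in zip(odd_field, even_field):
        frame.append(odd_line)
        frame.append(even_line)
    return frame


# A toy 1080-line frame; each "line" is just a label here.
frame = [f"line {n}" for n in range(1, 1081)]
odd_field, even_field = split_fields(frame)

fields_per_second = 60
frames_per_second = fields_per_second // 2  # two fields make one frame

print(len(odd_field), len(even_field))        # 540 540
print(odd_field[-1], even_field[-1])          # line 1079 line 1080
print(weave(odd_field, even_field) == frame)  # True
print(frames_per_second)                      # 30
```

Each field carries half of the 1080 lines, which is why it takes two of them to make one complete picture.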

The television to my right was set to 60 Hz. It was taking the broadcast signal and basically passing it on to the display without any significant processing[1]. The television on the left, on the other hand, was set to refresh the screen 120 times per second. One way to make that work would be to take the 60 Hz signal and double it by drawing each frame twice, but that would be wasting a key feature of the TV set. Instead, most high frame-rate TV sets will draw intermediate fields to approximate the look of motion captured at 120 Hz. Since the signal contains only 60 fields, the processor in the TV “guesses” what the intermediate field should be. Perhaps a processor with the proper algorithm could make the fields look “natural,” but I’ve yet to see one do so. Consequently, I disable the high refresh mode on any television set I watch whenever I have access to the remote control.
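To make the difference between the two approaches concrete, here is a toy Python sketch. It is an assumption on my part, not how any particular TV works: real sets use their own motion-estimation algorithms, while this sketch stands in a simple average of neighboring frames for the “guess.” The names `repeat_frames` and `blend_frames` are hypothetical, and each “frame” is a short list of pixel brightness values.

```python
def repeat_frames(frames):
    """Double the rate by showing every frame twice (no guessing)."""
    doubled = []
    for frame in frames:
        doubled.extend([frame, frame])
    return doubled


def blend_frames(frames):
    """Double the rate by inserting the average of each neighboring pair.

    Assumes at least one frame. The last frame has no successor,
    so it is simply repeated.
    """
    doubled = []
    for current, following in zip(frames, frames[1:]):
        midpoint = [(a + b) / 2 for a, b in zip(current, following)]
        doubled.extend([current, midpoint])
    doubled.extend([frames[-1], frames[-1]])
    return doubled


# Three toy "frames" of four pixels: a bright dot moving left to right.
source = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
]

for frame in blend_frames(source):
    print(frame)
```

Both functions turn three frames into six, but only `repeat_frames` shows nothing that wasn't in the signal. The blended version invents in-between frames such as `[0.5, 0.5, 0.0, 0.0]`, two half-bright dots that the camera never recorded.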

Adapting a Faster Refresh Rate

One possible solution would be to increase the frame rate of the signal to 120 Hz. While older sets could just drop every other frame to display the signal at 60 Hz, that doesn’t seem feasible, as it would require new cameras to record at 120 Hz, higher bandwidth to broadcast the signal, and reconfiguring a ton of video hardware. But this is not the first time we’ve dealt with differing frame rates. In fact, we’ve done this for almost 90 years, since the coming of sound. Here are some common frame rates for film and video.

| Medium | Resolution | Frames/fields per second | Application |
|---|---|---|---|
| silent film | N/A | usually 16 frames | movies before 1927 |
| sound film | N/A | 24 frames | movies after 1927 |
| NTSC video | 525 lines | 30 frames, interlaced | TV in North America and where alternating current is 60 Hz |
| PAL video | 576 lines | 25 frames, interlaced | TV in Europe and where alternating current is 50 Hz |
| HD 720p | 720 lines | 60 frames, progressive | high-definition broadcast, low-bandwidth broadband streaming |
| HD 1080i | 1080 lines | 60 fields, interlaced | high-definition broadcast |
| HD 1080p | 1080 lines | up to 60 frames, progressive | high-definition broadband, Blu-ray |

You’ve probably watched all of these types of content on a video screen at one point or another, and it’s probably not looked as bad as what I see when I look at a 120 Hz television set. The fact that we’ve been able to watch movies on television for over 60 years without discussing a frame rate issue is quite impressive. While battling insomnia last night, I tried to figure out how film, at 24 frames per second, was adapted to television, at 30 frames per second. As I tossed and turned, I remembered something from the closing credits of many TV shows.

Telecine

Faced with the challenge of transferring film at 24 frames per second to video at 30 frames per second, you can probably imagine a few solutions. Allow me to eliminate some of them with the following requirements.

- You can’t drop or add any frames because the result would look jittery.

- You can’t speed up or slow down the film because it would change the running time of the film and the pitch of the audio.

How do you do it?

The short answer is to run it through a telecine machine. While that explains what to do, it doesn’t explain how it works. The best description of a telecine explains that the solution requires both interlacing and electronically blending the frames. That allows you to maintain each film frame and the total running time of the original film.

The most common approach is known as a 2:3 pulldown. This process involves recording every four film frames on five video frames (or ten video fields). Below is a graphic from Wikimedia that illustrates the process.

Each film frame is captured on two or more fields. The first field, for example, captures the odd field of the first film frame. The second field captures the even field of the first film frame. The third and fourth fields do the same for the second film frame, but the fifth field doubles back and captures the odd field of the second frame again. The sixth field then captures the even field of the third film frame.

This table might do a better job at outlining the process of capturing four film frames in ten fields.

| Video Field | Film Frame | Odd or Even | Result |
|---|---|---|---|
| 1 | 1 | odd | TV Frame 1 |
| 2 | 1 | even | |
| 3 | 2 | odd | TV Frame 2 |
| 4 | 2 | even | |
| 5 | 2 | odd | TV Frame 3 |
| 6 | 3 | even | |
| 7 | 3 | odd | TV Frame 4 |
| 8 | 4 | even | |
| 9 | 4 | odd | TV Frame 5 |
| 10 | 4 | even | |

If you’re wondering if there’s something to this ratio, in fact, there is.

4:10 is equal to 24:60. There are 24 film frames in one second of a film as there are 60 video fields in a second of NTSC video.
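The cadence is simple enough to sketch in a few lines of Python. This is only an illustration of the field-to-frame mapping described above, not a model of an actual telecine machine, and the function name `pulldown_2_3` is my own.

```python
from itertools import cycle


def pulldown_2_3(film_frames):
    """Map film frames onto video fields using a 2:3 cadence.

    Film frames alternately occupy 2 and 3 fields. Field parity simply
    alternates odd, even, odd, even, ... regardless of the film frame.
    Returns a list of (field_number, film_frame, parity) tuples.
    """
    fields = []
    field_number = 1
    for film_frame, repeat in zip(film_frames, cycle([2, 3])):
        for _ in range(repeat):
            parity = "odd" if field_number % 2 == 1 else "even"
            fields.append((field_number, film_frame, parity))
            field_number += 1
    return fields


# Four film frames fill ten fields, i.e. five video frames.
for field_number, film_frame, parity in pulldown_2_3([1, 2, 3, 4]):
    print(field_number, film_frame, parity)

# One second of film: 24 frames fill exactly 60 fields.
one_second = pulldown_2_3(range(1, 25))
print(len(one_second))  # 60
```

The first loop prints the same ten rows as the table, and the second confirms that 24 film frames fill exactly the 60 fields in one second of NTSC video.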

It’s Better Not to Guess

Interlacing and blending probably wouldn’t work too well when the frame rate is doubled, such as when displaying a 60 Hz signal on a 120 Hz display, but they do suggest alternatives to interpolating intermediate frames. The reason the telecine worked so well, other than jagged interlacing artifacts that can be easily smoothed out, is that it didn’t “guess” what was in the image. It used the image itself.

[1] “Insignificant processing” might include color saturation, contrast, tint, and all those settings we’ve seen on TV sets for over 60 years.